Trending News

(NEW PODCAST) False Prophets And Apostles Use Psychic Tactics To Scam The Gullible

(OPINION) In tonight’s segment, we discuss a recent report revealing False Apostles and...

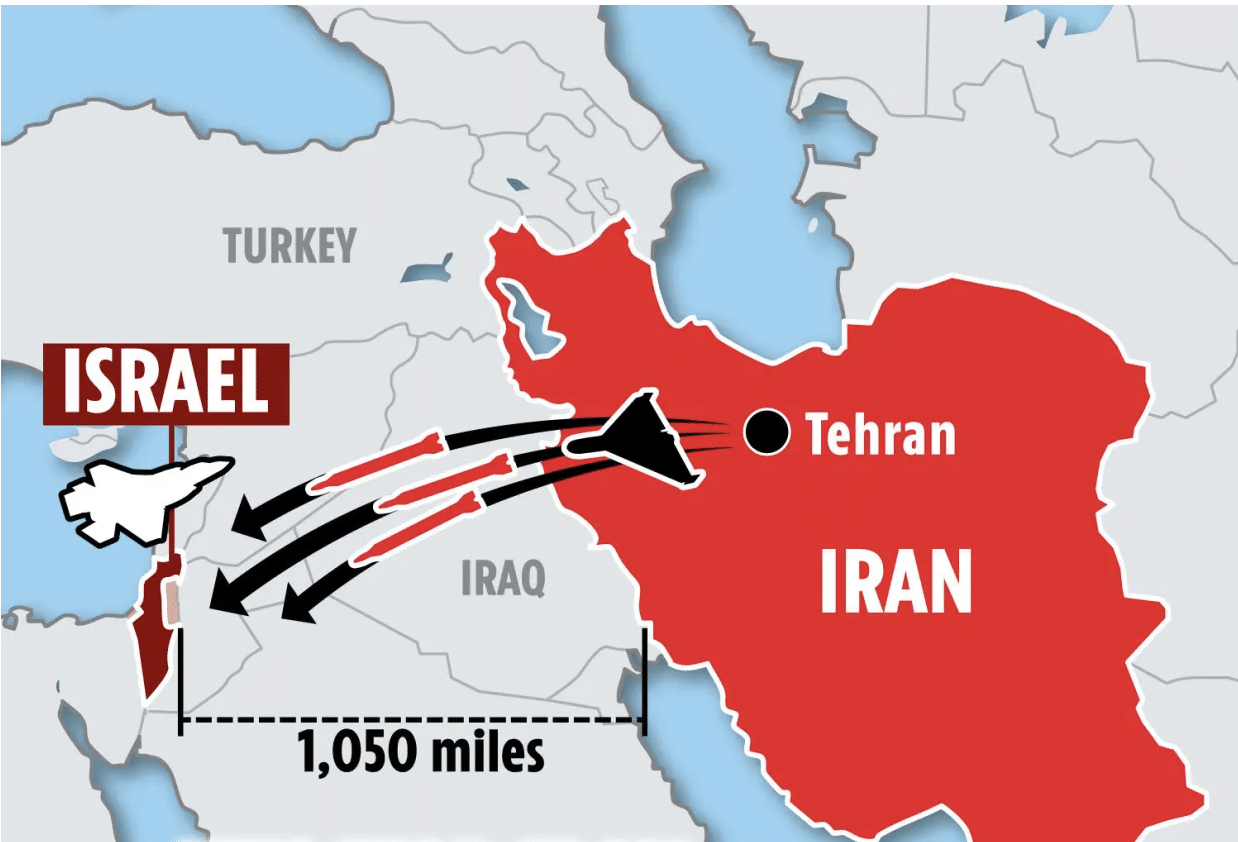

New report reveals Russian weapons helped Iran harden defenses against Israeli airstrikes

Last March, a Russian arms maker invited a delegation of Iranians to a VIP shopping tour of its...

Protests spread as anti-Israel agitators shut down traffic and disrupt cities across US

Traffic on both lanes of California’s Golden Gate Bridge was shut down Monday, the...

(WATCH) Mysterious object with humanoid characteristics seen descending in skies above Sequoia Park, California

A Twitter (X) user shares what appears to be an unidentified object with humanoid characteristics...

Iran threatens to unleash 1,500 missiles if Israel launches revenge attack

Israel’s response to Iran’s drone and missile barrage may be “imminent”,...

FBI opens criminal investigation into Baltimore bridge collapse

The FBI is conducting a criminal investigation into the deadly collapse of Baltimore’s Francis...

(WATCH) Pastor is kicked off stage for criticizing ‘Strip-Show-Like performance’ at Megachurch event

(OPINION) Controversy erupted at the Stronger Men’s Conference in Springfield, Missouri, organized...

Nebraska deputies catch naked married substitute teacher in car with student; ensuing chase ends in vehicle crash

A married substitute teacher was allegedly caught unclothed in the back of a vehicle with a high...

Pastor Greg Laurie on why Iran’s attack on Israel is a sign of the End Times

(OPINION) Iran’s attack on Israel is a significant biblical sign, said Pastor Greg Laurie of...

Biden to host Iraq’s leader after Iran’s attack on Israel spurs chaos across the Middle East

President Biden is set to host Iraq’s leader this week for talks after Iran’s unprecedented...

Sydney, Australia rocked by second stabbing in days as priest and worshippers targeted at church…

A second stabbing has been captured on camera in Sydney, this time taking place in a church...

(NEW PODCAST) World Holds Its Breath As The Middle East Is A Powder Keg Set To Explode

(OPINION) In this special broadcast, we discuss the recent attacks carried out by Iran against...

Maren Morris defends taking her toddler to a ‘family friendly’ drag show

Last year, country singer Maren Morris made headlines for suggesting Tennessee should...